Uncompressed 8K Ultra-HD Video Transmission Experiment: 8K Ultra-HD Video Processing System

Kanagawa Institute of Technology is conducting research into 8K Ultra-High Definition (8K UHD) video processing systems. We spoke with Mitsuru Maruyama and Katsuhiro Sebayashi, both professors at the Department of Information Network and Communication of the Faculty of Information Technology, Kanagawa Institute of Technology, about the main points and results of the research and the role played by SINET5.

(Interview date: November 10, 2020)

Could you start by explaining the purpose of research into 8K UHD video processing systems?

Maruyama: Our lab is conducting research into uncompressed 8K UHD video transmission technology. The research was prompted by the question of whether an 8K UHD program production environment could be realized on cloud-based infrastructure. When you watch a TV program, there are often scenes with overlays such as special effects and superimposed text inserted on top of the real video images. Since 8K UHD video involves vast amounts of data, this kind of processing takes an enormous amount of time, and so we wondered whether it would be possible to accelerate 8K UHD video editing in the cloud. Ultra-high-definition 8K video also has huge potential in areas besides broadcasting, such as healthcare, so we hoped to realize an environment that will help doctors carry out diagnosis remotely.

What type of technologies are required to achieve this?

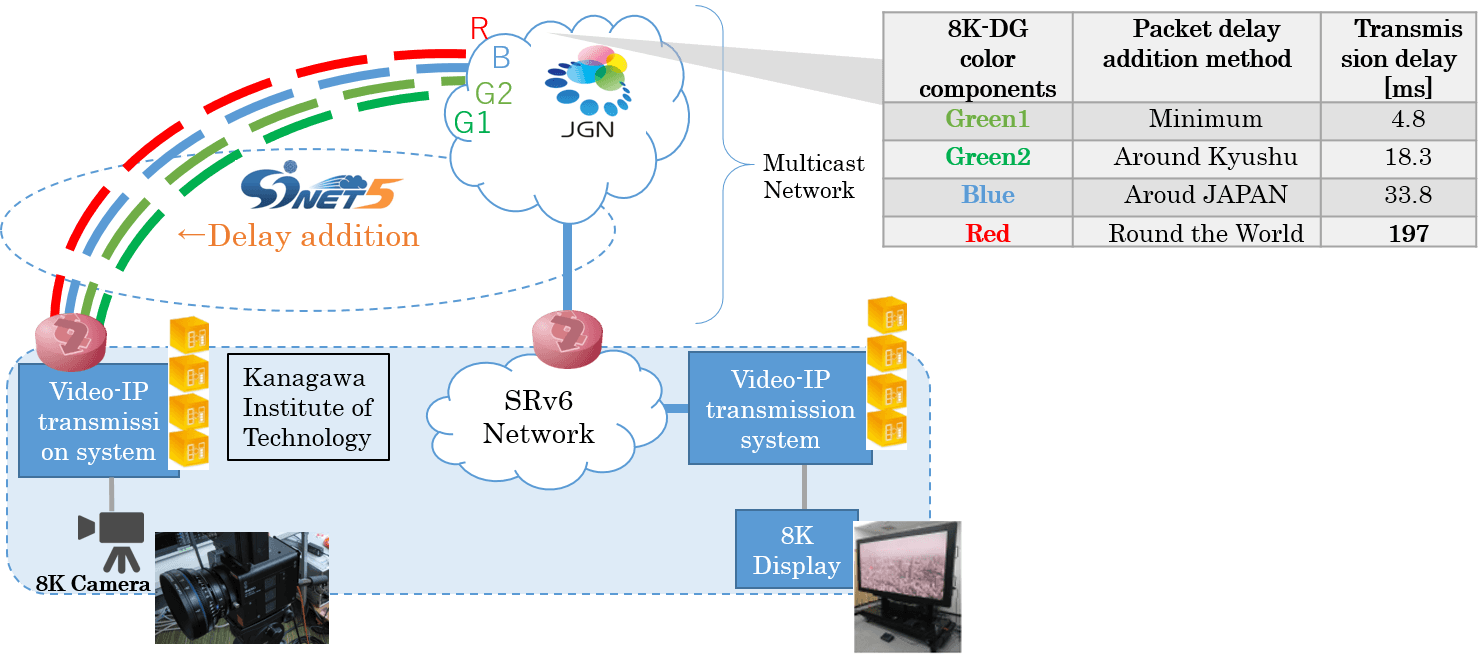

Maruyama: One is video processing platform technology, which enables real-time processing through edge-to-cloud integration, and the other is network cloud control technology for high-precision monitoring and virtual network control. Used together, these two technologies will make it easier to work with 8K UHD video. We use SINET and JGN to carry out the demonstration experiments but, at the end of the day, delivering 8K UHD requires a very high bitrate, which is why Kanagawa Institute of Technology has a SINET5 100 Gbps connection.

Sebayashi: Just to add, if I may, “cloud” in this context refers to distributed computing resources such as NFV (Network Function Virtualization), which we will explain later, and “edge” refers to the aggregation points for data delivery to end users. The latter in particular is probably easier to understand if you imagine something like an NTT station building.

Could you also tell us about your research progress to date?

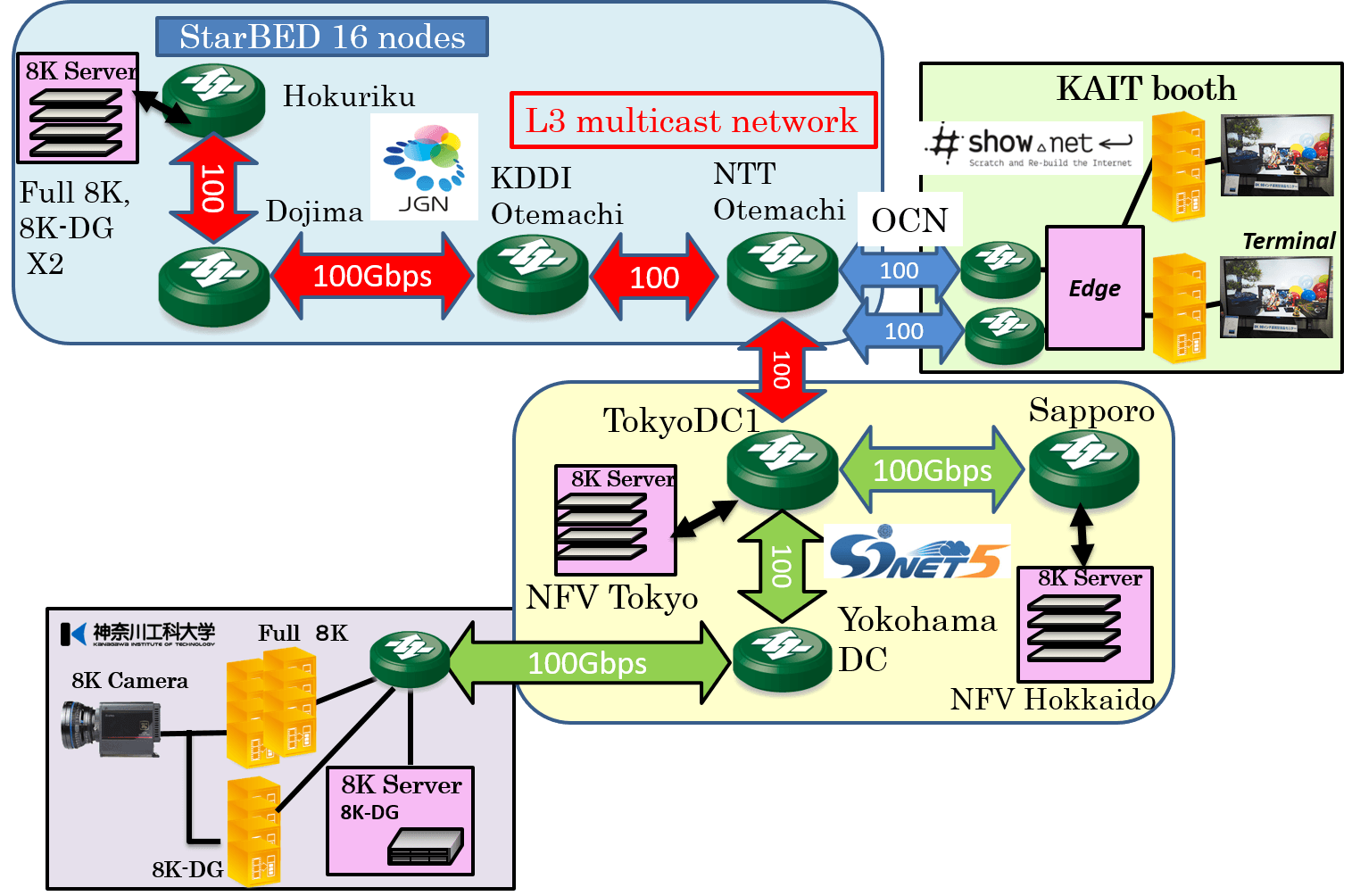

Maruyama: Firstly, in terms of transmission technology, we accomplished the world’s first streaming transmission of uncompressed 8K UHD video in 2014. We subsequently achieved uncompressed 8K UHD video transmission with real-time encryption in 2016, the world’s first transmission of uncompressed 8K UHD video over a 100 Gbps Ethernet path in 2017, and the streaming of 3D video using full-resolution uncompressed 8K UHD video in 2020. As far as these initiatives are concerned, the concept is simple and relies on brute force: using more transmission equipment and transmitting the data in parallel (laughs). Once transmission was possible, the next challenge was video data storage, and in 2015 we added a virtual 8K UHD-DG server to the StarBed test bed environment provided by NICT. Later, in 2017, we added a server that could support 8K UHD full-resolution video, and we are now using a group of NFV servers provided by NII to try to build a distributed 8K UHD video server system.

What do you aim to achieve in building a distributed 8K UHD video server system?

Maruyama: The aim is to realize 8K UHD live streaming servers using unused VMs (virtual machines) in the cloud. In the cloud, there are many VMs which are not being used. If we could allocate resources to these, we might be able to significantly reduce system costs and improve flexibility. Of course, we would need to establish various methodologies to enable this, but we thought we would give it a try using NFV servers provided by NII. This latest test environment is made up of six VMs at the Sapporo DC and two VMs at the Tokyo DC, with a bandwidth per VM of 3 Gbps, making a total bandwidth of 24 Gbps. Through this experiment, we gained knowledge of how to realize smooth video transmission over a distributed server system, as well as of tuning methods.
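(Editor's note) The bandwidth arithmetic for the test environment described above can be sketched as follows. The VM counts and the 3 Gbps per-VM figure come from the interview; the `VmGroup` type, the VM names, and the round-robin assignment of video stripes to VMs are hypothetical illustrations, since the interview does not say how the video data is actually distributed across the servers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VmGroup:
    """A set of identical VMs at one data center (hypothetical model)."""
    site: str
    vm_count: int
    gbps_per_vm: float


def total_bandwidth_gbps(groups):
    """Aggregate bandwidth if every VM sends at its full per-VM rate."""
    return sum(g.vm_count * g.gbps_per_vm for g in groups)


def assign_stripes(groups, stripe_count):
    """Assign video stripes to VMs round-robin.

    ASSUMPTION: round-robin striping is only one plausible scheme,
    chosen here for illustration; it is not the lab's stated method.
    """
    vms = [f"{g.site}-vm{i}" for g in groups for i in range(g.vm_count)]
    return {stripe: vms[stripe % len(vms)] for stripe in range(stripe_count)}


# Figures quoted in the interview: six VMs in Sapporo, two in Tokyo, 3 Gbps each.
TESTBED = [VmGroup("sapporo", 6, 3.0), VmGroup("tokyo", 2, 3.0)]

if __name__ == "__main__":
    print(total_bandwidth_gbps(TESTBED))  # 24.0
    for stripe, vm in assign_stripes(TESTBED, 8).items():
        print(stripe, vm)
```

With eight stripes, each VM carries exactly one, so the aggregate of eight 3 Gbps flows matches the 24 Gbps total mentioned in the interview.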

What was the next step?

Maruyama: The next challenge after transmission and storage was, of course, the incorporation of processing, and we attempted high-speed video switching using edge-to-cloud integration technology. With traditional video switching using IP multicast, for example, the video ends up cutting out in less than 10 seconds. Since this is no good for professional applications, we came up with a method of edge switching using DPDK (Data Plane Development Kit). By incorporating service chaining in addition to this, we are attempting to build a platform that will enable easy integration of video processing features such as video switching, media synthesis and separation, and lag adjustment.

I’ve heard your students are also actively involved in such demonstration experiments and research, right?

Sebayashi: Since networks are now part of the infrastructure of enterprises and universities, the days when users had easy access to them are gone. I also feel that, as a result, there are more people who lack basic knowledge. Our key educational policies are education focused on practical skills and support for gaining qualifications, so we are eager to let students get involved in demonstration experiments if they want to, and to learn new skills through hands-on experience. Incidentally, our students also lay fiber optic cables themselves and so know everything about the roof space and vertical shaft structure of the Faculty of Information Technology building (laughs).

This sounds like it will be a very valuable opportunity for students.

Sebayashi: Our research is based on collaboration with enterprises such as NTT Group companies and research institutions such as NII and NICT. Since our students have gone to the trouble of getting themselves here, I want them to learn as much as they can from professionals in each field and be inspired. We are often in contact with NII in particular, making various requests in connection with our experiments and even when our students get in touch, NII deals with them directly. The professors who are engaged in the joint research also give our students all kinds of advice, which I am extremely grateful for.

Could you also tell us about the role played by SINET5?

Maruyama: We use a 100 Gbps connection for our experiments, which is separate from our infrastructure connection, but it would be impossible for us to provide a network that could support such a high bandwidth over a large area ourselves. In the case of the 8K UHD video transmission experiment at the Sapporo Snow Festival, we created a network environment connecting Hokkaido, Tokyo, Osaka, Okinawa and Kanagawa Institute of Technology, but this would not have been possible without SINET. For our research, SINET is absolutely essential.

Sebayashi: What is more, SINET5 also connects us to scientific networks around the world such as Internet2 and GÉANT. In the future, the streaming of videos from all kinds of overseas research institutions including observatories is also conceivable.

In your view, what are the prospects for the future?

Maruyama: So far, we have focused on uncompressed 8K UHD video streams in the 24 Gbps and 48 Gbps bandwidths, but next we would like to transmit full-spec 8K UHD video. In this case, the transmission rate will increase to as much as 144 Gbps, and so we have our hopes pinned on next-generation SINET. We would also like to try sending uncompressed 8K UHD video to AIST’s AI machines to get them to learn in real time.
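(Editor's note) To give a feel for where figures of this size come from, the sketch below computes the raw payload rate of 7680×4320 video as width × height × frame rate × bits per pixel. The frame rates and pixel formats listed are assumptions chosen because they land close to the 24, 48 and 144 Gbps figures quoted above; the interview does not state which formats the lab uses, and the calculation ignores blanking intervals, audio and packet overhead.

```python
def raw_video_gbps(width, height, fps, bits_per_pixel):
    """Raw active-pixel payload rate in Gbps (1 Gbps = 1e9 bit/s)."""
    return width * height * fps * bits_per_pixel / 1e9


WIDTH, HEIGHT = 7680, 4320  # 8K UHD resolution

# ASSUMPTION: illustrative format choices, not taken from the interview.
# bits per pixel = bit depth x average samples per pixel
#   4:2:0 -> 1.5 samples per pixel, 4:4:4 -> 3 samples per pixel
FORMATS = {
    "60 fps, 4:2:0, 8-bit": (60, 12),
    "60 fps, 4:4:4, 8-bit": (60, 24),
    "120 fps, 4:4:4, 12-bit": (120, 36),
}

if __name__ == "__main__":
    for name, (fps, bpp) in FORMATS.items():
        rate = raw_video_gbps(WIDTH, HEIGHT, fps, bpp)
        print(f"{name}: {rate:.1f} Gbps")
```

Under these assumptions the three rows come out at roughly 23.9, 47.8 and 143.3 Gbps, which shows why a single 100 Gbps link is not enough for the full-spec case.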